Artificial intelligence expert: ChatGPT is much dumber than people think

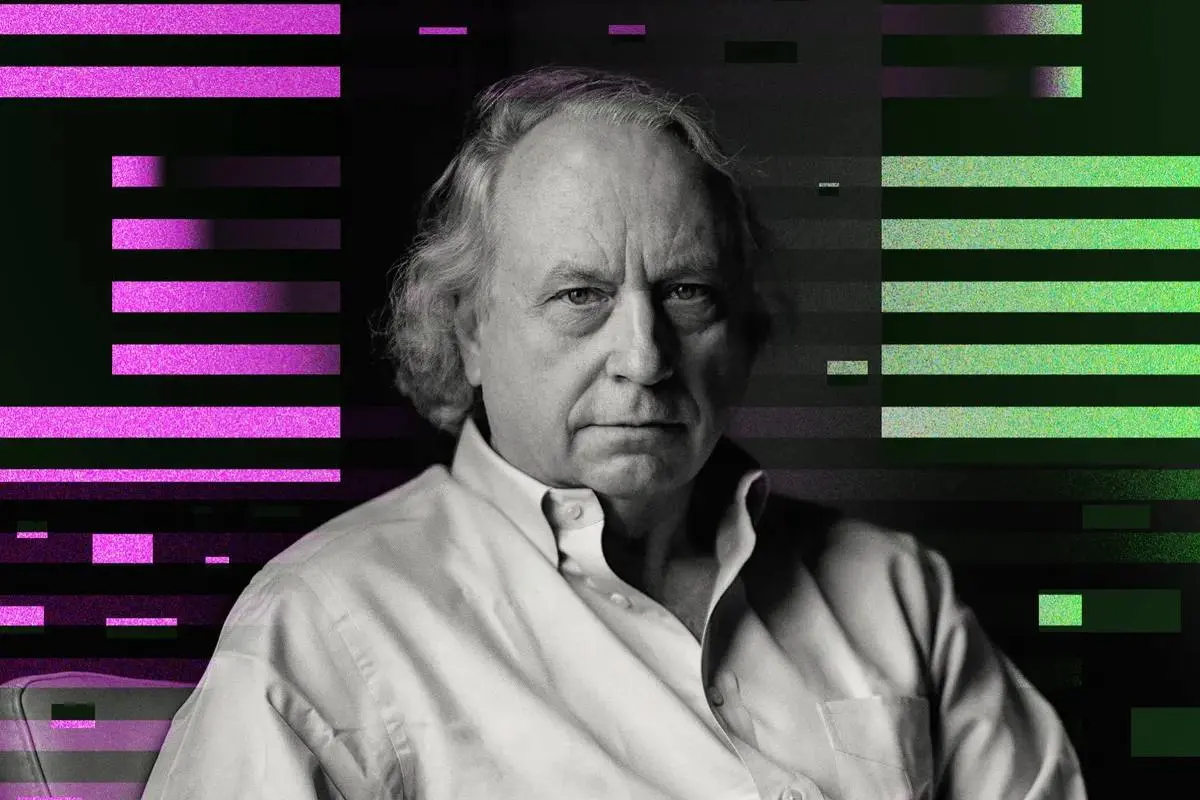

Rodney Brooks says large language models can only pretend to perform like humans.

Rodney Brooks, a robotics researcher and AI expert, says we have exaggerated the capabilities of OpenAI's large language models, on which the ChatGPT chatbot is based.

In an interview with IEEE Spectrum, Brooks argues that AI tools are much dumber than we think. Of course, this doesn't mean that AI technology should be written off as unable to compete with humans at some tasks.

Essentially, Brooks questions whether AI is on its way to becoming a form of artificial general intelligence (AGI), that is, whether it can reach a human-like intellectual level and act as humans do.

Futurism writes that Brooks' comments highlight the current limitations of AI and how easily these tools' output can be manipulated. In the eyes of this AI expert, chatbots like ChatGPT are designed to look human-like.

Brooks told IEEE Spectrum, "We can see what humans can do, and that's what we can judge other things against, but it doesn't mean that the performance generalizes deservedly to AI systems."

In other words, although current large language models appear to be able to infer logical meaning, they cannot actually do so.

Brooks says, ‘Artificial intelligence’s performance in providing a natural tone of voice and differentiating responses is very good.’ The researcher says he ran into more problems when testing large language models to help with confidential coding.

In summary, Brooks believes that artificial intelligence can reach a very impressive level of performance in the future, but that it will not achieve AGI.

Given the dangers of artificial intelligence systems replacing humans, Brooks' outlook is likely to be the better one for many jobs in the future.